Why Traditional Safety Falls Short

Safety is a universal priority in construction, but accidents continue to plague the industry. Despite regulations and inspections, human error remains the weak link. AI now provides the tools to monitor, predict, and prevent risks at scale. At Wenti Labs, we integrate AI-powered safety checks into daily workflows, ensuring compliance is continuous — not just a checkbox.

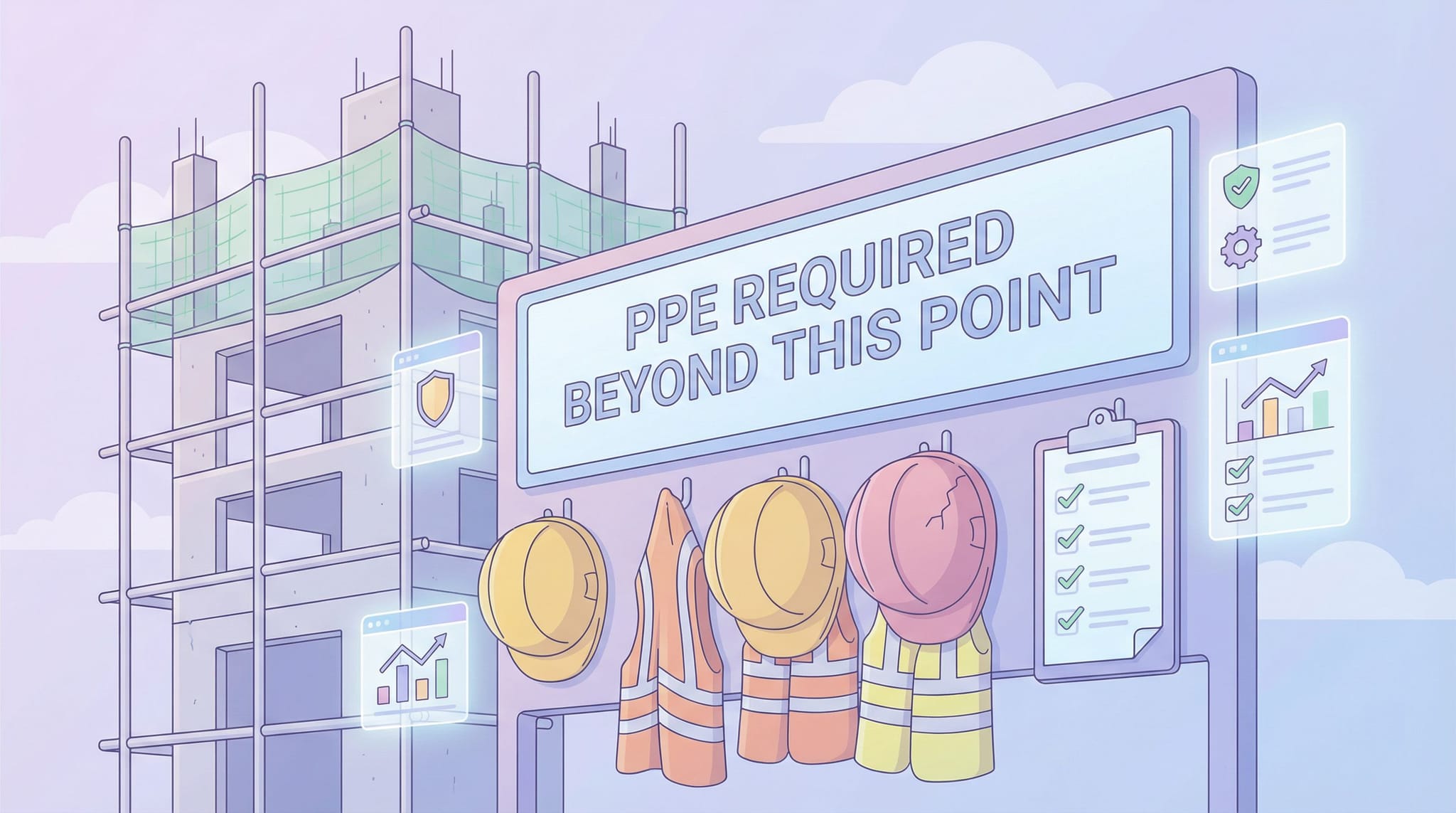

Construction remains one of the world's most dangerous industries. Despite strict regulations, manual safety inspections cannot cover every hazard. Common problems include:

- Human error in detecting risks.

- Reactive safety culture, where fixes come only after an accident has happened.

- Scattered reporting across forms, apps, and chats.

The result: delayed interventions and avoidable accidents.

This is where AI agents in construction are making the biggest difference: not by replacing safety officers, but by giving them real-time visibility they've never had before. The International Labour Organization estimates that at least 60,000 fatal accidents occur on construction sites worldwide each year. Many could be prevented with earlier detection.

The Gap in Traditional Safety Monitoring


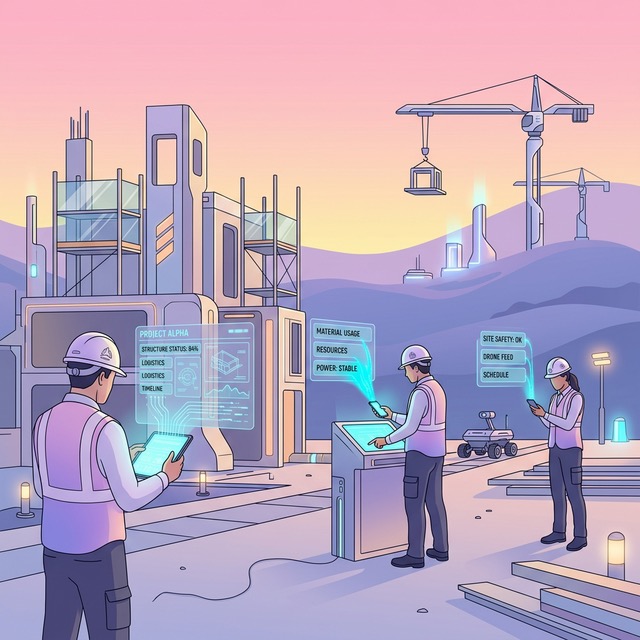

How AI Elevates Safety Standards

AI turns safety into a continuous, proactive process. Rather than relying on periodic walkthroughs, AI agents for construction safety work around the clock — processing images, flagging anomalies, and escalating risks before they become incidents.

Key capabilities:

- Computer Vision: Detects missing PPE, unsafe scaffolding, or workers without harnesses in real time.

- Predictive Analytics: By analyzing historical incident data, AI highlights high-risk areas before they become accidents.

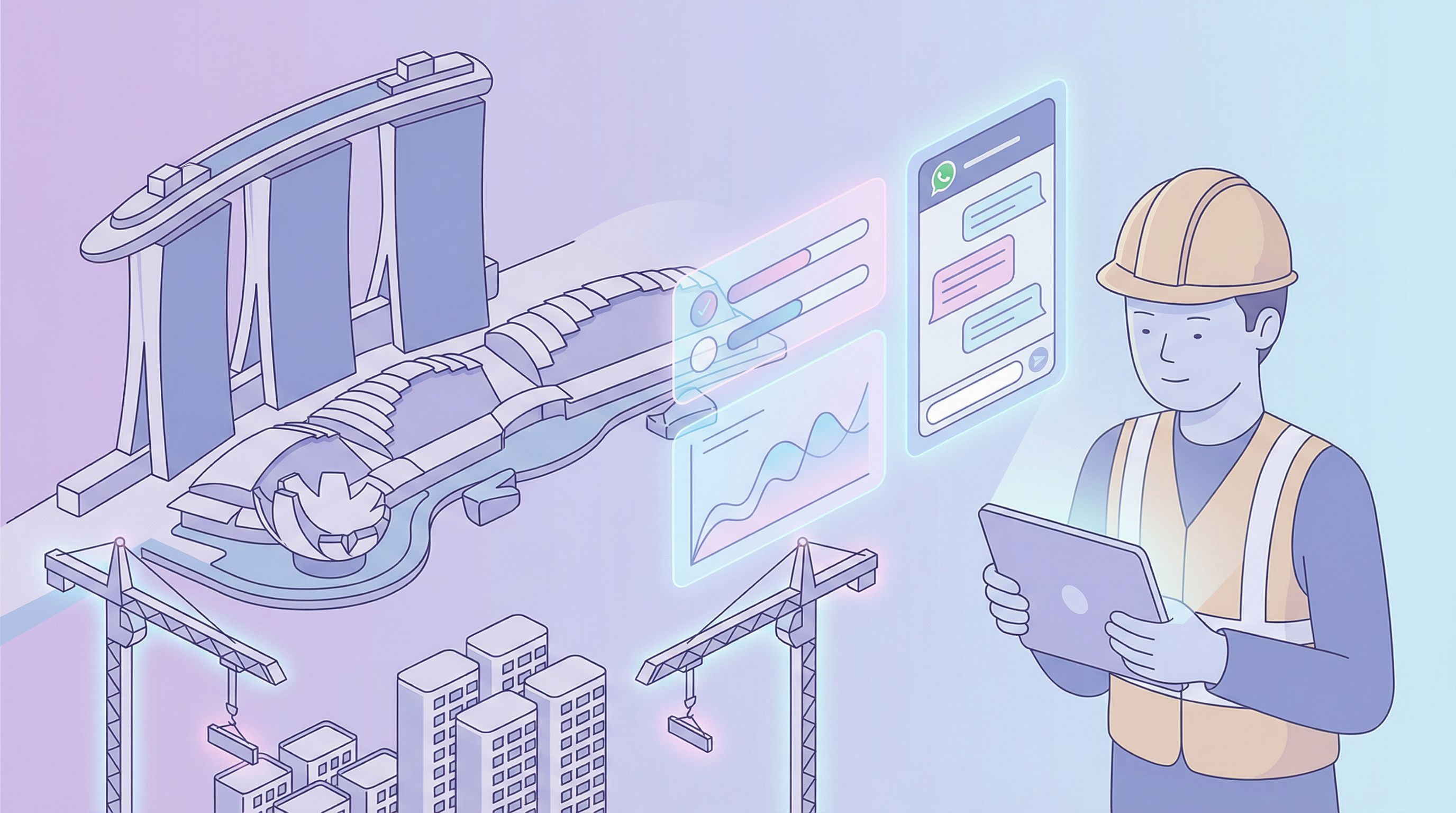

- Automated Reporting: WhatsApp site photos and notes are converted into compliance-ready logs automatically.
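To make the predictive analytics idea concrete, here is a minimal sketch that ranks site zones by severity-weighted incidents per 1,000 worker-hours. It is a simple rate-based baseline, not a description of how any particular product works. The `Incident` record, the 1-to-3 severity scale, the zone names, and the worker-hour figures are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    zone: str      # hypothetical site zone label
    severity: int  # assumed scale: 1 = minor, 3 = serious


def rank_zones(incidents, hours_by_zone):
    """Sort zones by severity-weighted incidents per 1,000 worker-hours."""
    weighted = defaultdict(int)
    for incident in incidents:
        weighted[incident.zone] += incident.severity
    scores = {
        zone: 1000 * weighted[zone] / hours
        for zone, hours in hours_by_zone.items()
        if hours > 0  # skip zones with no recorded exposure
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Illustrative data only; not real incident records.
history = [
    Incident("scaffold-east", 3),
    Incident("scaffold-east", 2),
    Incident("loading-bay", 1),
]
hours = {"scaffold-east": 2000, "loading-bay": 4000, "site-office": 1000}

ranking = rank_zones(history, hours)
for zone, score in ranking:
    print(f"{zone}: {score:.2f}")
```

Normalising by worker-hours keeps busy zones from looking dangerous simply because more people work there. A production system would likely replace this rate with a trained model that also uses weather, task type, and crew data.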

Vision Pipeline


These capabilities integrate into dashboards that display:

- Live risk flags from AI

- PPE compliance rates

- Heatmaps of unsafe zones for proactive interventions
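The dashboard figures above can be derived from per-worker detection records. The sketch below assumes each record carries a zone label and a boolean PPE result from an upstream vision model; the `Detection` type and the sample data are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    zone: str     # hypothetical site zone label
    ppe_ok: bool  # assumed output of an upstream vision model


def ppe_compliance_rate(detections):
    """Share of detections with compliant PPE, or None if there are none."""
    if not detections:
        return None
    return sum(d.ppe_ok for d in detections) / len(detections)


def unsafe_zone_counts(detections):
    """Count non-compliant detections per zone (the input to a heatmap)."""
    return Counter(d.zone for d in detections if not d.ppe_ok)


# Illustrative data only.
detections = [
    Detection("scaffold-east", False),
    Detection("scaffold-east", False),
    Detection("loading-bay", True),
    Detection("loading-bay", False),
    Detection("site-office", True),
]

rate = ppe_compliance_rate(detections)
hot_zones = unsafe_zone_counts(detections).most_common()
print(f"PPE compliance: {rate:.0%}")
print(hot_zones)
```

Returning `None` for an empty window, rather than 0 or 100 percent, lets a dashboard show "no data" instead of a misleading compliance figure.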

This is what separates modern construction AI from legacy safety software — the ability to act on data as it arrives, not days later in a spreadsheet.

Wenti Labs' Approach to Smarter Safety

Our focus is on frictionless adoption. Workers simply snap photos via WhatsApp; AI processes them, flags issues, and alerts supervisors instantly. No training, no extra apps — just real-time intelligence woven into everyday routines.

This is the core principle behind our AI agents in construction: meet teams where they already work. Instead of asking site crews to learn new tools, our agentic AI extracts safety-relevant data from the messages and photos they're already sending.
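As a rough illustration of turning an everyday site message into a structured safety record, the sketch below uses plain keyword matching as a stand-in for the AI extraction step. The keyword table, the `SafetyLogEntry` fields, and the alert rule are assumptions for this example, not the actual pipeline, and nothing here calls a real messaging API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical keyword-to-category table; a real system would use a model.
HAZARD_KEYWORDS = {
    "harness": "fall protection",
    "scaffold": "scaffolding",
    "helmet": "PPE",
}


@dataclass
class SafetyLogEntry:
    received_at: datetime
    sender: str
    text: str
    hazards: list
    needs_alert: bool


def to_log_entry(sender, text, received_at):
    """Convert one chat message into a structured log entry."""
    lowered = text.lower()
    hazards = sorted(
        {label for keyword, label in HAZARD_KEYWORDS.items() if keyword in lowered}
    )
    # Assumed rule: any detected hazard triggers a supervisor alert.
    return SafetyLogEntry(received_at, sender, text, hazards, bool(hazards))


entry = to_log_entry(
    "site-crew-1",
    "Worker on level 3 scaffold without harness",
    datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc),
)
print(entry.hazards, entry.needs_alert)
```

Keeping the original message text and timestamp in the entry means the log can still serve as audit evidence even if the extraction rules change later.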

The Impact:

- Fewer delays from manual reporting.

- Faster action on high-risk issues.

- Improved compliance for audits.

AI transforms safety from a reactive obligation to a proactive shield for workers.

Frictionless Safety Reporting in Action


The Business Case for AI-Driven Safety

Beyond saving lives, AI-powered safety has a direct impact on project economics. Workplace incidents cause schedule delays, regulatory fines, and insurance cost spikes, so projects with strong safety records are less exposed to the overruns that follow them.

By deploying AI agents for construction safety, teams can:

- Reduce incident rates and the rework that follows

- Maintain audit-ready documentation without manual effort

- Lower insurance premiums through demonstrable risk reduction

The ROI isn't hypothetical — it shows up in fewer stop-work orders, faster close-outs, and stronger client confidence.

In Summary

Construction safety can't afford to stay manual. The data is already being captured on every job site — in photos, messages, and daily logs. The missing piece is an intelligent layer that structures and acts on that data in real time.

At Wenti Labs, we build AI agents for construction that turn everyday site communication into automated safety intelligence. No new apps, no behaviour change — just smarter, safer sites.

If your team is looking to improve safety outcomes without adding overhead, let's talk.

See how construction teams are using AI agents to capture real site data without changing their workflows — read our case studies.